One of the big benefits of a Cloud environment is the access to almost unlimited storage. Cloud storage comes in a variety of flavours, depending on your use case. Like any AWS service though, it’s up to you to make sure that you configure your storage to meet your security requirements. In this post, we are concentrating on security configuration for object storage (Amazon S3), block storage (Amazon EBS), database storage (as used by Amazon RDS) and network storage (Amazon EFS and FSx). Specifically, we will consider both encryption of data and managing access to it.

Encrypt, encrypt and encrypt

Dance like no-one is watching, encrypt like everyone is

Werner Vogels, CTO, Amazon

It’s really simple. Enable encryption at rest everywhere. It’s almost free in AWS and, for most workloads, has no noticeable performance impact.

EBS

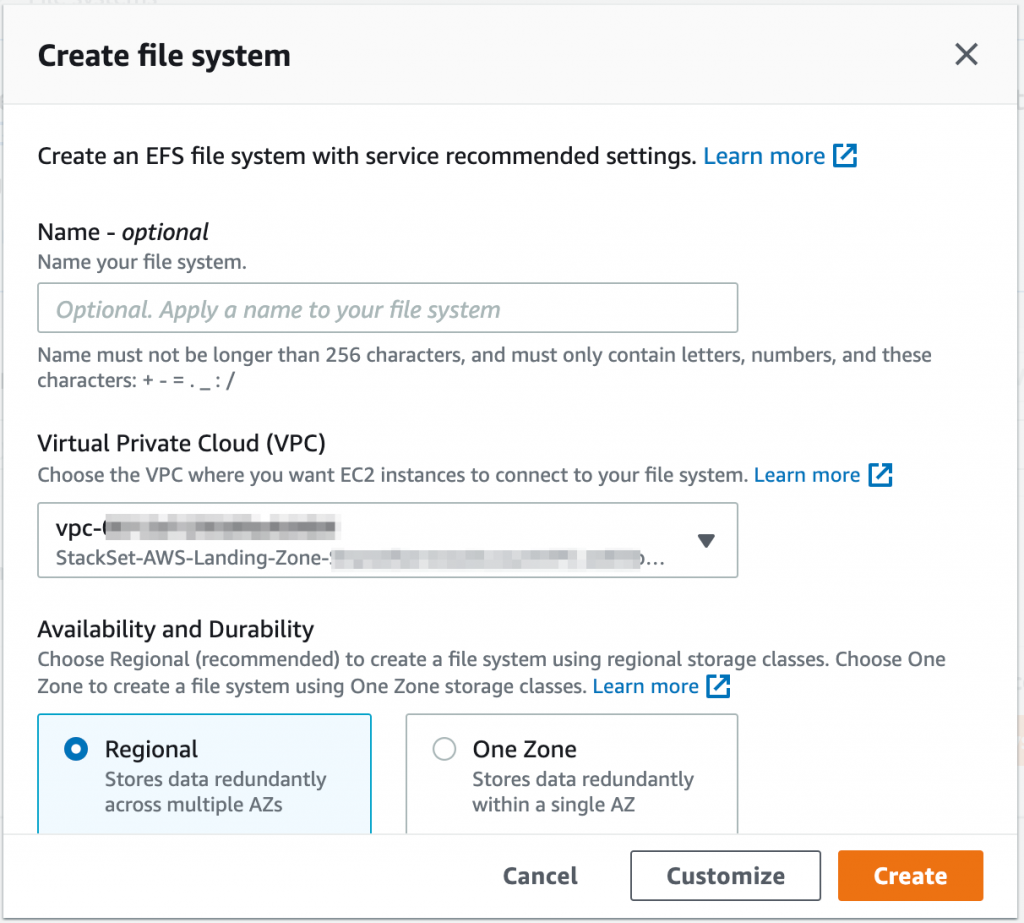

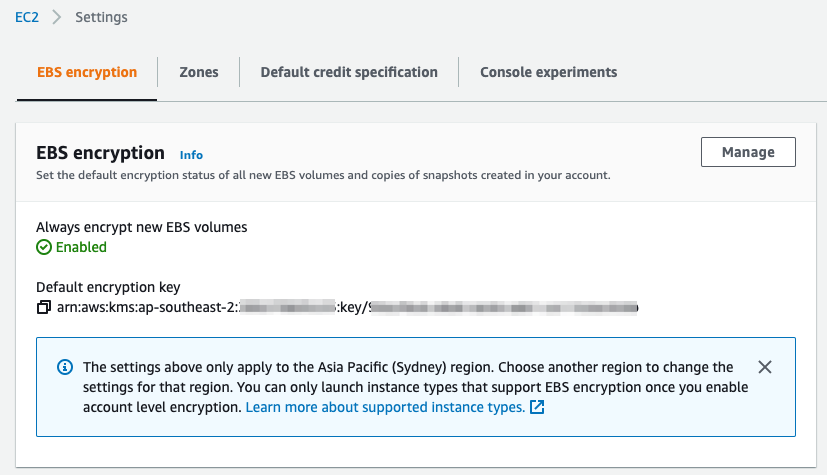

For EBS block storage, you can set up encryption by default. This means that all new volumes created in the Region will be encrypted!

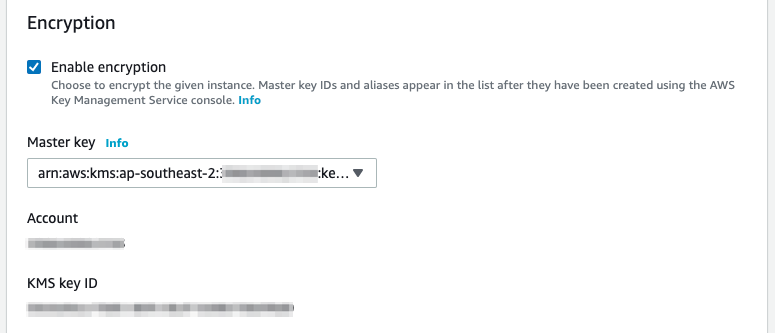

From the EC2 console, select EBS Encryption. Now, click on Manage. Finally, set up encryption by default by ticking the Enable box and selecting an encryption key. You can use the default AWS managed KMS key for EBS or you can create your own. If you ever want to share volumes or snapshots with other accounts (more on that later), you should use a customer managed key rather than an AWS managed key.
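The console steps above can also be scripted. The following is a minimal sketch, assuming a boto3 EC2 client is passed in (for example `boto3.client("ec2", region_name="eu-west-1")`). The function name and the key alias in the usage note are hypothetical.

```python
from typing import Any, Optional


def enable_default_ebs_encryption(ec2_client: Any, kms_key_id: Optional[str] = None) -> bool:
    """Turn on EBS encryption by default for the client's Region.

    ec2_client is expected to behave like boto3.client("ec2").
    kms_key_id is a value you supply (key ID, alias or ARN). When it is
    omitted, the AWS managed key for EBS remains the default key.
    """
    if kms_key_id is not None:
        # Point the Region's default at a customer managed key.
        ec2_client.modify_ebs_default_kms_key_id(KmsKeyId=kms_key_id)
    response = ec2_client.enable_ebs_encryption_by_default()
    # The response reports the resulting state of the setting.
    return bool(response.get("EbsEncryptionByDefault", False))
```

A call such as `enable_default_ebs_encryption(ec2, "alias/my-ebs-key")` would need to be repeated for each Region you use, as the setting is per Region.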

Note that an AWS managed KMS key is still unique to your account. AWS managed keys are created, managed, and used on your behalf. There is no cost for an AWS managed key but it can only be used in that AWS account. A customer managed key will cost you $1 per key per month. In fact, we recommend always using customer managed KMS keys where they are supported. They give you far more control over how the key is used (through the key policy) for a small cost.

Creating a customer managed key

To create a customer managed key, go to the KMS Console.

- Select Customer managed keys;

- Click on Create key;

- Select a Symmetric key;

- Give the key a name;

- Select the user(s) or role(s) who can administer this key. This should be considered a highly privileged action, so choose carefully!

- Select the user(s) or role(s) who can use this key. This will provide access to encrypt and decrypt EBS volumes. Note that if you plan to use auto-scaling for EC2 instances, you will need to give the EC2 Auto Scaling service-linked role access to the customer managed key;

- Click on Finish to create the key.
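The split between key administrators and key users in the steps above ends up in the key policy. Here is a simplified sketch of such a policy built in Python. The function name, the `Sid` values and the exact action lists are illustrative assumptions and may differ from what the console generates.

```python
import json
from typing import List


def build_key_policy(account_id: str, admin_arns: List[str], user_arns: List[str]) -> str:
    """Return a KMS key policy (JSON) that separates administrators from users."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {   # Lets IAM policies in the owning account grant access to the key.
                "Sid": "EnableIamPolicies",
                "Effect": "Allow",
                "Principal": {"AWS": f"arn:aws:iam::{account_id}:root"},
                "Action": "kms:*",
                "Resource": "*",
            },
            {   # Administrators manage the key but get no data operations.
                "Sid": "KeyAdministrators",
                "Effect": "Allow",
                "Principal": {"AWS": admin_arns},
                "Action": [
                    "kms:Describe*", "kms:Enable*", "kms:Disable*",
                    "kms:Put*", "kms:Update*",
                    "kms:ScheduleKeyDeletion", "kms:CancelKeyDeletion",
                ],
                "Resource": "*",
            },
            {   # Users can encrypt and decrypt with the key.
                "Sid": "KeyUsers",
                "Effect": "Allow",
                "Principal": {"AWS": user_arns},
                "Action": [
                    "kms:Encrypt", "kms:Decrypt", "kms:ReEncrypt*",
                    "kms:GenerateDataKey*", "kms:DescribeKey",
                ],
                "Resource": "*",
            },
            {   # Users can let AWS services (such as EBS) create grants on the key.
                "Sid": "AllowGrantsForAwsResources",
                "Effect": "Allow",
                "Principal": {"AWS": user_arns},
                "Action": ["kms:CreateGrant", "kms:ListGrants", "kms:RevokeGrant"],
                "Resource": "*",
                "Condition": {"Bool": {"kms:GrantIsForAWSResource": "true"}},
            },
        ],
    }
    return json.dumps(policy, indent=2)
```

The resulting JSON could be passed as the `Policy` argument of boto3’s `kms.create_key`. Treat the action lists as a starting point to tighten, not a recommendation.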

You can now go back to the EC2 console to set up EBS encryption using the new key.

RDS

Encryption for Amazon’s Relational Database Service (RDS) is enabled when you create an RDS instance. RDS encryption encrypts the underlying storage (much like EBS above). However, you can’t enable it by default for all RDS instances. Note that for Oracle and SQL Server, you can also use Transparent Data Encryption, which encrypts the data within the database itself; we aren’t covering that here.

Much like EBS, you can use a default AWS managed KMS key for RDS or a customer managed key that you create. You will find the encryption configuration under Additional configuration when you create an RDS instance in the console.
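If you create instances from code rather than the console, the same choice is made through the `StorageEncrypted` and `KmsKeyId` parameters. The sketch below only builds the parameter set. The helper name, engine, instance class and user name are placeholder assumptions.

```python
from typing import Any, Dict, Optional


def encrypted_db_instance_params(identifier: str, kms_key_id: Optional[str] = None) -> Dict[str, Any]:
    """Build keyword arguments for an encrypted RDS instance.

    All concrete values here are illustrative placeholders.
    """
    params: Dict[str, Any] = {
        "DBInstanceIdentifier": identifier,
        "Engine": "postgres",
        "DBInstanceClass": "db.t3.micro",
        "AllocatedStorage": 20,            # GiB
        "MasterUsername": "dbadmin",
        "ManageMasterUserPassword": True,  # let RDS manage the master password
        "StorageEncrypted": True,          # encryption is chosen at creation time
    }
    if kms_key_id:
        # Without this, the AWS managed key for RDS is used.
        params["KmsKeyId"] = kms_key_id
    return params
```

With boto3 this would typically be used as `boto3.client("rds").create_db_instance(**encrypted_db_instance_params("app-db", key_arn))`.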

S3

S3 object storage is one of the most heavily used Cloud storage services. Much like other storage services, encryption of S3 at rest is managed at the resource level, in this case the S3 bucket. To encrypt data in a bucket, you have two choices: client-side or server-side encryption.

With client-side encryption, the data is encrypted before uploading it to S3. The calling application or service needs to manage the encryption and decryption. Two options are provided for client-side encryption. A master key can be stored on the client side or on the server side (in KMS). If a master key is stored on the client side, the client takes full responsibility for encryption. The advantage of this approach is that AWS never sees the encryption keys used by your application or service.

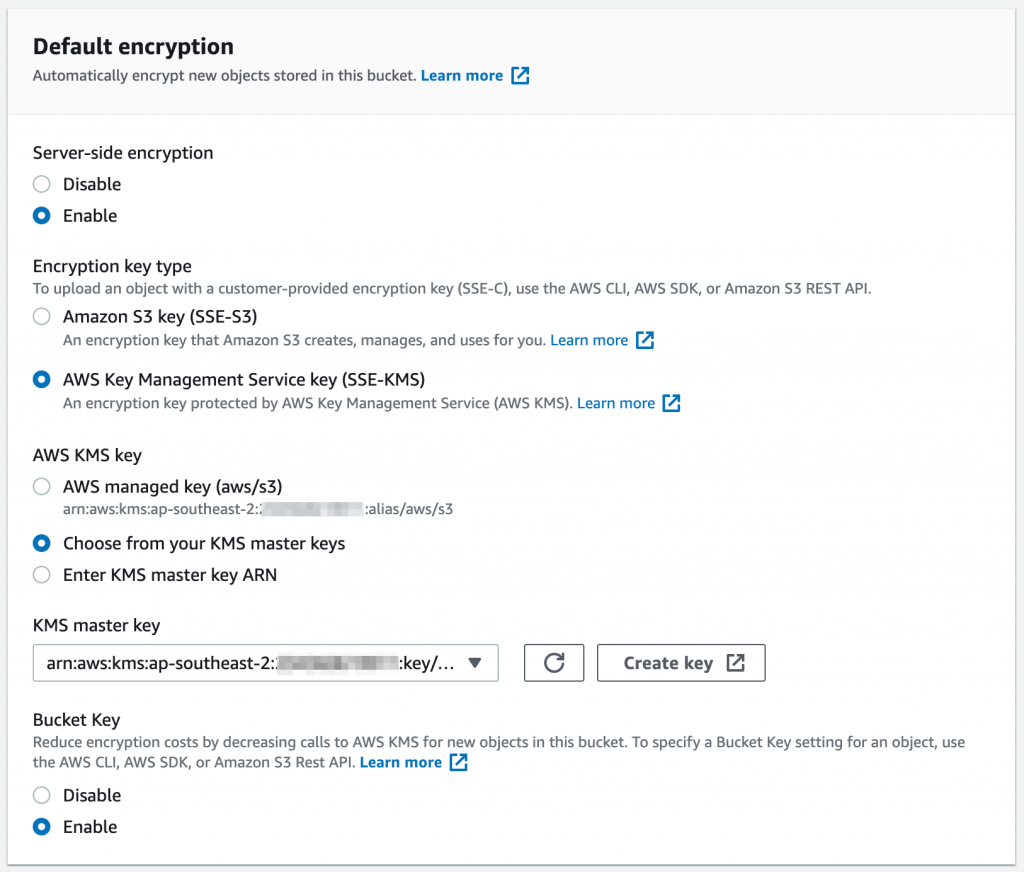

With server-side encryption, the data is encrypted by the S3 service before being written to storage. All encryption operations are performed in the AWS Cloud. When you download from the S3 bucket, AWS decrypts the data in the AWS Cloud and sends the unencrypted data to you. This process is transparent for end-users. There are three options for server-side encryption.

- SSE-S3 is the simplest method. The keys are managed and handled by AWS to encrypt your data;

- SSE-KMS uses a KMS key to encrypt S3 data on the AWS side. You manage the key in AWS KMS. The advantages of using the SSE-KMS encryption type are control over the key policy and an audit trail of key usage and management;

- Finally, there is SSE-C where you provide the encryption key. AWS never stores this key. The key is passed to AWS with each request when performing data encryption or decryption. You need to manage and protect the key.
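To make the three server-side options concrete, here is a small sketch that maps each one to the encryption arguments of a boto3 `put_object` call. The helper name and the mode labels are assumptions made for illustration.

```python
from typing import Any, Dict, Optional


def sse_put_object_args(
    mode: str,
    kms_key_id: Optional[str] = None,
    customer_key: Optional[bytes] = None,
) -> Dict[str, Any]:
    """Return the encryption-related keyword arguments for s3.put_object."""
    if mode == "SSE-S3":
        # Keys are owned and handled entirely by S3.
        return {"ServerSideEncryption": "AES256"}
    if mode == "SSE-KMS":
        args: Dict[str, Any] = {"ServerSideEncryption": "aws:kms"}
        if kms_key_id:
            args["SSEKMSKeyId"] = kms_key_id
        return args
    if mode == "SSE-C":
        # You supply the key on every request and must keep it safe yourself.
        if customer_key is None or len(customer_key) != 32:
            raise ValueError("SSE-C needs a 256-bit (32-byte) key")
        return {"SSECustomerAlgorithm": "AES256", "SSECustomerKey": customer_key}
    raise ValueError(f"unknown encryption mode: {mode}")
```

For example, `s3.put_object(Bucket=bucket, Key=key, Body=data, **sse_put_object_args("SSE-KMS", key_arn))`. With SSE-C, the same key has to be passed again when reading the object.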

Choosing an encryption method

Wow! That’s a lot of options. So which should I use? The following questions will help you decide which encryption method is appropriate for your data set. Remember that you probably don’t have a one-size-fits-all approach to your data.

- Who can encrypt and decrypt the data?

- Do you have the capability to securely store the secret key?

- Do you want to manage the secret key?

In general, for ultra-sensitive data, you might decide to use client-side encryption. However, it is more difficult to manage as you now need to manage your keys and perform the encryption locally. As a general approach, we recommend the use of SSE-KMS for all buckets. From a cost perspective, combine this with an S3 Bucket Key (a per-bucket key that reduces the number of requests made to KMS) unless you need a per-object key.
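As a sketch of that recommendation, the helper below builds a default encryption configuration that uses SSE-KMS with the bucket key switched on. The function name is hypothetical; the dictionary follows the shape boto3 expects for `put_bucket_encryption`.

```python
from typing import Any, Dict


def default_encryption_config(kms_key_arn: str, bucket_key: bool = True) -> Dict[str, Any]:
    """Build a bucket default-encryption configuration using SSE-KMS."""
    return {
        "Rules": [
            {
                "ApplyServerSideEncryptionByDefault": {
                    "SSEAlgorithm": "aws:kms",
                    "KMSMasterKeyID": kms_key_arn,
                },
                # Per-bucket key: fewer KMS requests than a key per object.
                "BucketKeyEnabled": bucket_key,
            }
        ]
    }
```

It would be applied with something like `s3.put_bucket_encryption(Bucket=bucket, ServerSideEncryptionConfiguration=default_encryption_config(key_arn))`.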

Whatever method you choose, remember to only use HTTPS to access the S3 bucket.
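One common way to enforce HTTPS is a bucket policy that denies any request where `aws:SecureTransport` is false. A minimal sketch, with a hypothetical function name and `Sid`:

```python
import json


def https_only_bucket_policy(bucket: str) -> str:
    """Return a bucket policy (JSON) that denies requests not made over TLS."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyInsecureTransport",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:*",
                # Cover both the bucket itself and every object in it.
                "Resource": [
                    f"arn:aws:s3:::{bucket}",
                    f"arn:aws:s3:::{bucket}/*",
                ],
                "Condition": {"Bool": {"aws:SecureTransport": "false"}},
            }
        ],
    }
    return json.dumps(policy, indent=2)
```

The JSON string can be set with `s3.put_bucket_policy(Bucket=bucket, Policy=https_only_bucket_policy(bucket))`. If the bucket already has a policy, the statement would need to be merged into it rather than replacing it.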

You can set encryption on a new bucket. You can also enable it for existing buckets so that all new objects will be encrypted. Note that if you do add encryption to an existing bucket, you’ll need to make sure that any user or role that accesses the bucket is allowed to use the KMS key. To read objects, add the following to the IAM policy.

kms:Decrypt

If you need to both read and write objects, add the following to the IAM policy.

kms:Decrypt kms:GenerateDataKey
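Put together as a full IAM policy statement, those actions might look like the sketch below. The function name and `Sid` are hypothetical, and the key ARN is whatever key protects the bucket.

```python
from typing import Any, Dict


def kms_access_policy(key_arn: str, write: bool = False) -> Dict[str, Any]:
    """Build an IAM policy granting the KMS actions needed for an SSE-KMS bucket."""
    actions = ["kms:Decrypt"]                   # enough to read objects
    if write:
        actions.append("kms:GenerateDataKey")   # also needed to write objects
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "UseBucketKmsKey",
                "Effect": "Allow",
                "Action": actions,
                "Resource": key_arn,  # scope to the one key, not "*"
            }
        ],
    }
```

This only covers the KMS side; the user or role still needs the usual S3 permissions on the bucket itself.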

EFS

EFS is AWS’s network file system (NFS) compatible network storage. It is suited for EC2, ECS and Lambda based compute.

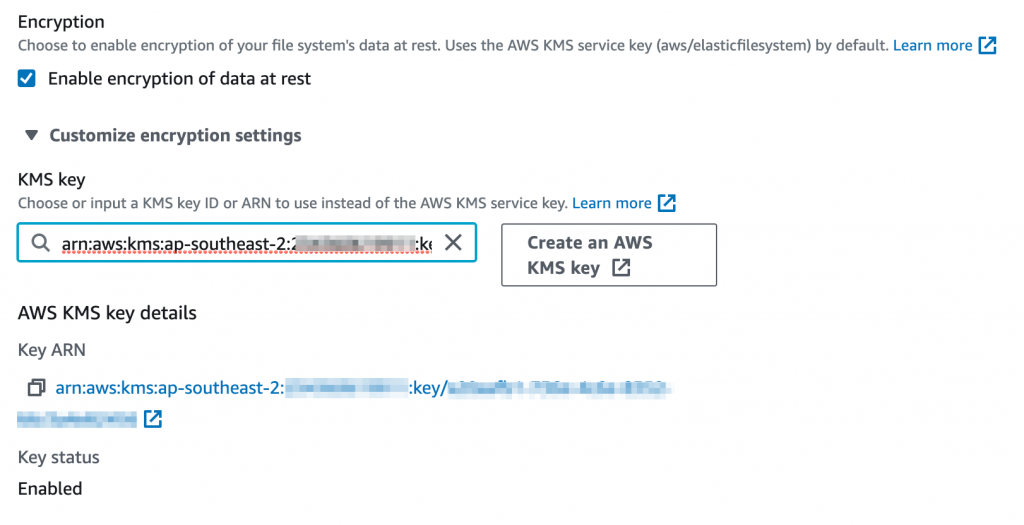

Encryption of EFS volumes is enabled by default at creation time. However, this uses your default AWS managed KMS key for EFS. If you want to use a customer managed KMS key, you need to select Customize and then expand the Customize encryption settings to select the KMS key.

FSx

Finally, we look at Amazon FSx for Windows in relation to Cloud storage. It is the SMB equivalent of Amazon EFS. It provides network storage for Windows-based environments and integrates with Microsoft Active Directory (AD). Similar to EFS, encryption is enabled by default using your default AWS managed KMS key for FSx. At creation time, you should change this to use a customer managed KMS key.

Managing access to Cloud storage

Now that you’ve ensured that you are encrypting your storage, how do you manage access to it? For some services, this is relatively simple. For others, however, there’s a little more to it! Note that we aren’t covering network access here as that will be covered in a later post (hint: AWS PrivateLink and VPC Endpoints are your friend!).

S3

First let’s look at S3 as it’s a little different.

Public access to buckets

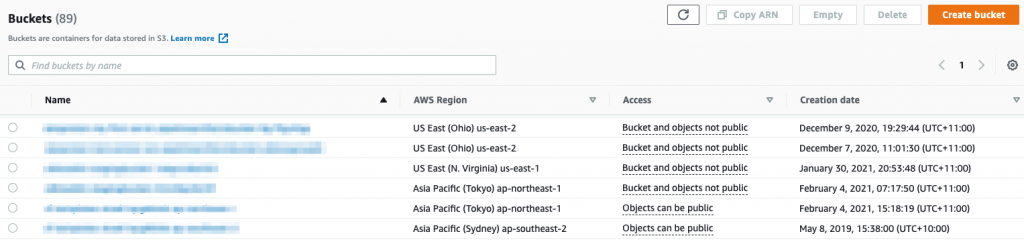

S3 is often used to deliver public content, sometimes unintentionally! It may be used as the source of static content for a website or behind the Amazon CloudFront content delivery network. However, the vast majority of your buckets will most likely not need to be publicly accessible. How do I make sure that is the case? For starters, the bucket list in the S3 console will show buckets with public access or buckets that can be configured for public access.

By default, new buckets and objects don’t allow public access. However, you can modify bucket policies or object permissions to allow public access. S3 Block Public Access is a way to prevent the configuration of any public access to data within S3 buckets, overriding bucket policies and permissions. It is available in the S3 console and we recommend enforcing blocking of public access. If you do need public access to an S3 bucket, create a separate account and manage it there.
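Block Public Access can also be set from code. A minimal sketch, assuming a boto3 S3 client is passed in; the constant and function names are hypothetical.

```python
from typing import Any, Dict

# All four settings on: block new public ACLs and policies, ignore existing ones.
BLOCK_ALL_PUBLIC_ACCESS: Dict[str, bool] = {
    "BlockPublicAcls": True,
    "IgnorePublicAcls": True,
    "BlockPublicPolicy": True,
    "RestrictPublicBuckets": True,
}


def block_public_access(s3_client: Any, bucket: str) -> None:
    """Apply S3 Block Public Access to a single bucket.

    s3_client is expected to behave like boto3.client("s3").
    """
    s3_client.put_public_access_block(
        Bucket=bucket,
        PublicAccessBlockConfiguration=BLOCK_ALL_PUBLIC_ACCESS,
    )
```

The same four settings can also be applied to a whole account (through the S3 Control API in boto3), which is usually the simpler way to enforce them everywhere.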

Managing access within AWS

Bucket policies are similar to IAM policies (and key policies) in that they are used to control access to the bucket: who can do what to a bucket. Bucket policies are applied at the bucket, not object, level. IAM policies can also be used to control access to S3 buckets. The advantage of IAM policies is that you can centrally manage permissions for all AWS services. However, bucket policies also allow you to provide cross-account access to a bucket (access from another AWS account). It can be tricky to manage when using both. We recommend using bucket policies for cross-account or highly granular control (around access protocols for example) and IAM for general access management for S3.
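As an illustration of the cross-account case, the sketch below builds a bucket policy granting another account read-only access. The function name, `Sid` values and the choice of read-only actions are assumptions for the example.

```python
import json


def cross_account_read_policy(bucket: str, other_account_id: str) -> str:
    """Return a bucket policy (JSON) giving another AWS account read-only access."""
    principal = {"AWS": f"arn:aws:iam::{other_account_id}:root"}
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {   # Listing applies to the bucket itself.
                "Sid": "CrossAccountList",
                "Effect": "Allow",
                "Principal": principal,
                "Action": "s3:ListBucket",
                "Resource": f"arn:aws:s3:::{bucket}",
            },
            {   # Reading applies to the objects inside the bucket.
                "Sid": "CrossAccountRead",
                "Effect": "Allow",
                "Principal": principal,
                "Action": "s3:GetObject",
                "Resource": f"arn:aws:s3:::{bucket}/*",
            },
        ],
    }
    return json.dumps(policy, indent=2)
```

Users and roles in the other account still need an IAM policy in their own account allowing the same actions. If the bucket uses SSE-KMS, the key policy has to allow them too.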

S3 buckets can also use ACLs but these are really a legacy control mechanism. For new buckets, always use a bucket policy over an ACL. The main use case for an ACL is that they can be applied at an object level within a bucket.

So what happens if I have IAM policies, S3 bucket policies and S3 ACLs? Following the principle of least privilege, all policies will be evaluated. Without an explicit allow, access will be denied. If any of these protection mechanisms contains an explicit deny, access will be denied regardless of any allow.
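The rule described above can be sketched as a tiny function. This is a deliberately simplified model, not the full AWS evaluation logic: it ignores service control policies, permission boundaries and the extra requirements for cross-account requests. The function name and return labels are made up for the example.

```python
from typing import Iterable, Optional


def evaluate(effects: Iterable[Optional[str]]) -> str:
    """Combine the effects of every policy statement that was evaluated.

    Each entry is "Allow", "Deny", or None when a statement did not match
    the request.
    """
    matched = [effect for effect in effects if effect is not None]
    if "Deny" in matched:
        return "ExplicitDeny"   # any explicit deny always wins
    if "Allow" in matched:
        return "Allow"          # at least one allow and no deny
    return "ImplicitDeny"       # nothing allowed the request


# IAM policy allows, bucket policy denies: the request is refused.
print(evaluate(["Allow", "Deny"]))
```

So an allow in an IAM policy cannot override a deny in a bucket policy, and a request that nothing mentions is refused by default.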

Managing access at scale

Finally, Amazon S3 Access Points simplify managing access to S3 for different business use cases. Access Points can be used to implement varying levels of access to a shared bucket (for example a data lake) without having to manage a highly complex bucket policy. An S3 Access Point is a network endpoint that you attach to a bucket. Each access point enforces an access point policy that works in conjunction with the bucket policy applied to the underlying bucket. End users or services then use independent access points and policies to access the same bucket. You can configure an access point to accept requests only from a VPC. If required, you can also define custom block public access settings for an access point.
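To give a feel for an access point policy, here is a sketch that lets one role read objects under a single prefix through a given access point. The function name, parameters and the prefix-based layout are assumptions for illustration.

```python
import json


def access_point_read_policy(
    region: str, account_id: str, access_point: str, role_arn: str, prefix: str
) -> str:
    """Return an access point policy (JSON) allowing reads under one prefix."""
    # Objects are addressed through the access point, not the bucket ARN.
    resource = (
        f"arn:aws:s3:{region}:{account_id}:accesspoint/{access_point}"
        f"/object/{prefix}/*"
    )
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ReadPrefixViaAccessPoint",
                "Effect": "Allow",
                "Principal": {"AWS": role_arn},
                "Action": "s3:GetObject",
                "Resource": resource,
            }
        ],
    }
    return json.dumps(policy, indent=2)
```

Each team or application would get its own access point and a policy like this one, while the bucket policy on the shared bucket stays short.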

Sharing EBS volumes

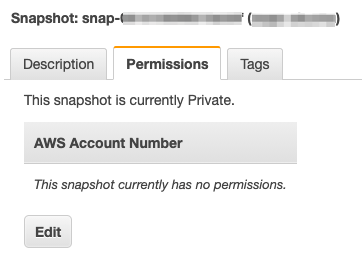

EBS volumes are an Availability Zone level construct. Snapshots (and AMIs) created from a volume can be used across a Region within an account (and also copied to other Regions). For many use cases, this is adequate.

However, for added protection for your Cloud storage, you might want to copy snapshots or AMIs between accounts. It’s very simple to share snapshots. As a result, you want to closely monitor that access. After all, it’s an easy way to extract data out of your environment! Fortunately, you can see any sharing of snapshots and AMIs in the console. Note that for encrypted volumes, you will also need to share the KMS key to be able to decrypt the volume in the destination account.
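Monitoring snapshot sharing can be automated as well as checked in the console. The sketch below lists snapshots shared publicly or with accounts outside an allow-list. It assumes a boto3 EC2 client is passed in, reads only the first page of results, and uses a hypothetical function name and "PUBLIC" marker.

```python
from typing import Any, Dict, List, Set


def find_externally_shared_snapshots(
    ec2_client: Any, trusted_accounts: Set[str]
) -> Dict[str, List[str]]:
    """Map snapshot IDs to the untrusted accounts (or "PUBLIC") they are shared with."""
    findings: Dict[str, List[str]] = {}
    # Only snapshots owned by this account; pagination is omitted for brevity.
    snapshots = ec2_client.describe_snapshots(OwnerIds=["self"])["Snapshots"]
    for snapshot in snapshots:
        snapshot_id = snapshot["SnapshotId"]
        attribute = ec2_client.describe_snapshot_attribute(
            SnapshotId=snapshot_id, Attribute="createVolumePermission"
        )
        shared_with: List[str] = []
        for permission in attribute.get("CreateVolumePermissions", []):
            if permission.get("Group") == "all":
                shared_with.append("PUBLIC")
            elif permission.get("UserId") and permission["UserId"] not in trusted_accounts:
                shared_with.append(permission["UserId"])
        if shared_with:
            findings[snapshot_id] = shared_with
    return findings
```

Running something like this on a schedule and alerting on any non-empty result is one way to keep an eye on that exit route for data.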

You should also adopt this approach for RDS snapshots.

Network storage

By definition, network storage needs to be accessible over the network! Both EFS and FSx for Windows volumes present as elastic network interfaces (ENIs) in your VPC. In order to manage the access, create a dedicated Security Group for the volume and assign access from resources or networks by creating security group rules. Note that if you create a network volume across multiple AZs (which is recommended), you can set a different security group for each of the AZs.
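A security group rule for the storage security group might be built as in the sketch below, which allows a client security group in on the file-sharing port. The helper and constant names are hypothetical; 2049 is the standard NFS port and 445 the standard SMB port.

```python
from typing import Any, Dict, List

NFS_PORT = 2049  # used by EFS mounts
SMB_PORT = 445   # used by FSx for Windows file shares


def storage_ingress_rule(port: int, client_sg_id: str) -> List[Dict[str, Any]]:
    """Build IpPermissions allowing one client security group in on one TCP port."""
    return [
        {
            "IpProtocol": "tcp",
            "FromPort": port,
            "ToPort": port,
            # Reference the clients' security group instead of an IP range.
            "UserIdGroupPairs": [
                {"GroupId": client_sg_id, "Description": "File share clients"}
            ],
        }
    ]
```

It would be applied with `ec2.authorize_security_group_ingress(GroupId=storage_sg_id, IpPermissions=storage_ingress_rule(NFS_PORT, client_sg_id))`. FSx for Windows may need further ports depending on your Active Directory setup, so check the AWS documentation before relying on 445 alone.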

For EFS, you can also define a file system policy. In the policy you can, for example, define whether access is read-only or read-write, enforce encryption in transit and block public access.
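A file system policy combining two of those controls could look like the following sketch: it grants one role read-only or read-write access and denies any client that does not use TLS. The function name and `Sid` values are hypothetical.

```python
import json


def efs_file_system_policy(file_system_arn: str, role_arn: str, read_only: bool = True) -> str:
    """Return an EFS file system policy (JSON) for one role, with TLS enforced."""
    actions = ["elasticfilesystem:ClientMount"]           # mount and read
    if not read_only:
        actions.append("elasticfilesystem:ClientWrite")   # also allow writes
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowRoleAccess",
                "Effect": "Allow",
                "Principal": {"AWS": role_arn},
                "Action": actions,
                "Resource": file_system_arn,
            },
            {   # Enforce encryption in transit for every client.
                "Sid": "DenyUnencryptedTransport",
                "Effect": "Deny",
                "Principal": {"AWS": "*"},
                "Action": "*",
                "Resource": file_system_arn,
                "Condition": {"Bool": {"aws:SecureTransport": "false"}},
            },
        ],
    }
    return json.dumps(policy, indent=2)
```

With boto3 the result would be applied using `efs.put_file_system_policy(FileSystemId=file_system_id, Policy=policy_json)`.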

Wrapping up

The AWS Cloud offers a rich set of services for your Cloud storage. As a result, there are lots of things to consider when managing and securing your data. We’ve only looked at some of the key areas to focus on and there are many more options to consider!

If you would like to dive deeper into securing your Cloud storage, please get in contact with us at RedBear. Look out for our next instalment in our AWS Security Foundations series next week!